If we define "AI problem" as an obstacle to maximizing the benefits of Artificial Intelligence, it is clear that there are a number of these, ranging from the technical and practical to the ethical and cultural. As we say goodbye to 2020, I think that we may look back on it, in a few years' time, as the year in which some of the most serious AI problems emerged into the mainstream of public discourse. However, there is one very troubling gap in this growing awareness of AI problems, a seldom discussed problem that I present below.

Growing Doubts About AI?

As one data science publication put it, 2020 was: "marked by ethical issues of AI going mainstream, including, but not limited to, gender/race bias, police and military use, face recognition, surveillance, and deep fakes." — The State of AI in 2020.

One of the most widely discussed indicators of problems in AI in 2020 was the “Timnit Gebru incident” (More than 1,200 Google workers condemn firing of AI scientist Timnit Gebru). This seems to be a debacle of Google’s own making, but it surfaced issues of AI bias, AI accountability, erosion of privacy, and environmental impact.

As we enter 2021, bias seems to be the AI problem that is “enjoying” the widest awareness. A quick Google search for ai bias produces 139 million results, of which more than 300,000 appear as News. However, 2020 also brought growing concerns about attacks on the way AI systems work, and the ways in which AI can be used to commit harm. These were documented notably in "Malicious Uses and Abuses of Artificial Intelligence," a report produced by Trend Micro Research in conjunction with the United Nations Interregional Crime and Justice Research Institute (UNICRI) and Europol’s European Cybercrime Centre (EC3).

Thankfully, awareness of AI problems was much in evidence at "The Global AI Summit," an online "think-in" that I attended last month. The event was organized by Tortoise Media, and some frank discussion of AI problems occurred after the presentation of highlights from the heavily researched and data-rich Global AI Index. Unfortunately, the AI problem that troubles me the most was not on the agenda (it was also absent from the Trend/UN report).

AI's Chip and Code Problem

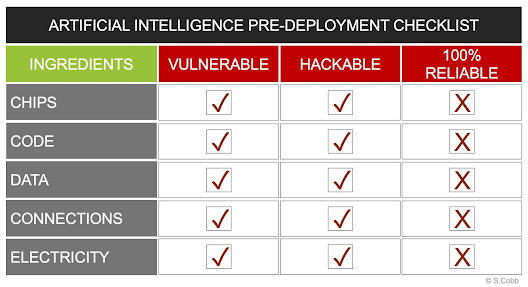

The stark reality, obscured by the hype around AI, is this: all implementations of AI are vulnerable to attacks on the hardware and software that run them. At the heart of every AI beats one or more CPUs running an operating system and applications. As someone who has spent decades studying and dealing with vulnerabilities in, and abuse of, chips and code, this is the AI problem that worries me the most:

AI RUNS ON CHIPS AND CODE, BOTH OF WHICH ARE VULNERABLE TO ABUSE

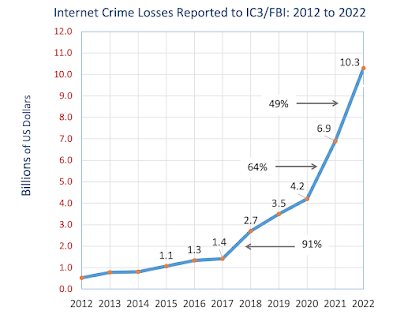

In the last 10 years we have seen successful attacks on the hardware and software at the heart of mission-critical information systems in hundreds of prestigious entities, both commercial and governmental. The roll call of organizations and technologies that have proven vulnerable to abuse includes the CIA, NSA, DHS, NASA, Intel, Cisco, Microsoft, FireEye, Linux, SS7, and AWS.

Yet despite a constant litany of new chip and code vulnerabilities, and wave after wave of cybercrime and systemic intrusions by nation states (some of which go undetected for months, even years), a constantly growing chorus of AI pundits persists in heralding imminent human reliance on AI systems as though it were an unequivocally good thing.

Such "AI boosterism" keeps building, seemingly regardless of the large body of compelling evidence that supports this statement: no builder or operator of any computer system, including those that run AI, can guarantee that it will not be abused, misused, impaired, corrupted, or commandeered through unauthorized access or changes to its chips and code.

And this AI problem is even more serious when you consider it is the one about which meaningful awareness seems to be lowest. Frankly, I've been amazed at how infrequently this underlying vulnerability of AI is publicly mentioned, noted, or addressed, where publicly means: "discoverable by me using Google and asking around in AI circles."

Of course, AI enthusiasts are not alone in assuming that, by the time their favorite technology is fully deployed, it will be magically immune to the chip-and-code vulnerabilities inherent in computing systems. Fans of space exploration are prone to similar assumptions. (Here's a suggestion for any journalists reading this: the next time you interview Elon Musk, ask him what kind of malware protection will be in place when he rides the SpaceX Starship to Mars.)

Boosters of every new technology (pun intended) seem destined to assume that the near future holds easy fixes for whatever downsides skeptics of that technology point out. Mankind has a habit of saying "we can fix that" but not actually fixing it, from the air-poisoning pollution of fossil fuels to ocean-clogging plastic waste. (I bet Mr. Musk sees no insurmountable problems with adding thousands of satellites to the Earth's growing shroud of space clutter.)

I'm not sure if I'm the first person to say that the path to progress is paved with assumptions, but I'm pretty sure it's true. I would also observe that many new technologies arrive wearing a veil of assumptions. This is evident when people present AI as so virtuous and beneficent that it would be downright churlish and immodest of anyone to question the vulnerability of its enabling technology.

The Ethics of AI Boosterism

One question I kept coming back to in 2020 was this: how does one avert the giddy rush to deploy AI systems for critical missions before they can be adequately protected from abuse? While I am prepared to engage in more detailed discussions about the validity of my concerns, I do worry that these will get bogged down in technicalities of which there is limited understanding among the general public.

However, as 2020 progressed and "the ethics of AI" began to enjoy long-overdue public attention, another way of breaking through the veil of assumptions obscuring AI's inherent technical vulnerability occurred to me. Why not question the ethics of "AI boosterism"? I mean, surely we can all agree that advocating development and adoption of AI without adequately disclosing its limitations raises ethical questions.

Consider this statement: as AI improves, doctors will be able to rely upon AI systems for faster diagnosis of more and more diseases. How ethical is it to say that, given what we know about how vulnerable AI systems will be if the hardware and software on which they run are not significantly more secure than what we have available today?

To be ethical, any pitches for AI backing and adoption should come with a qualifier, something like "provided that the current limitations of the enabling technology can be overcome." For example, I would argue that the earlier statement about medical use of AI would not be ethical unless it was worded something like this: as AI improves, and if the current limitations of the enabling technology can be overcome, doctors will be able to rely upon AI systems for faster diagnosis of more and more diseases.

Unlikely? Far-fetched? Never going to happen? I am optimistic that the correct answer is no. But I invite doubters to imagine for just a moment how much better things might have gone, how much better we might feel about digital technology today, if previous innovations had come with a clear up-front warning about their potential for abuse.

Word cloud: 40 digital technologies open to abuse

A few months ago, to help us all think about this, I wrote "A Brief History of Digital Technology Abuse." The article title refers to "40 chapters" but these are only chapter headings that match the 40 items in this word cloud. I invite you to check it out.

In a few weeks I will have some statistics to share about the general public's awareness of AI problems. I will be sure to provide a link here. (See: AI problem awareness grew in 2020, but 46% still “not aware at all” of problems with artificial intelligence.)

In the meantime, I would love to hear from anyone about their work, or anyone else's, on the problem of defending systems that run AI against abuse. (Use the Comments or the contact form at the top of the page, or DM @zobb on Twitter.)
